Your AI Coding Agent Reads 166 Tokens for Every 1 It Writes. Here's Why That's a Problem.

For every 1 token your AI writes, it reads 166. That 166:1 ratio explains why AI coding is expensive, slow, and constantly hitting limits. Here's the data.

49 posts published


Claude locks you out for up to 5 hours even though you barely sent any messages. The real reason: your AI agent silently consumed 100,000+ tokens reading files you didn't ask it to read.

Claude Code compacts your session and suddenly forgets which files it modified, what errors it found, and what it was working on. Here's the technical explanation of why — and how to prevent it.

Even at $200/month, Claude Max 20x users report their session draining from 21% to 100% on a single prompt. The problem isn't the plan — it's what's consuming your tokens.

Your Claude Pro subscription burns through its 5-hour session limit in minutes. Here's the technical reason: your AI agent wastes 70% of your tokens reading files instead of answering your question.

When Claude Code shows 'context left until auto-compact: 0%', it's about to summarize everything and throw away the details. Here's exactly what gets lost and why it matters.

Research tested 18 frontier models. Every single one gets worse as input length increases. This phenomenon — context rot — is why your AI coding assistant degrades mid-session.

Every AI coding assistant has a fixed context window — the maximum information it can hold at once. Here's what happens step by step when that window fills up, and why bigger windows don't fix the problem.

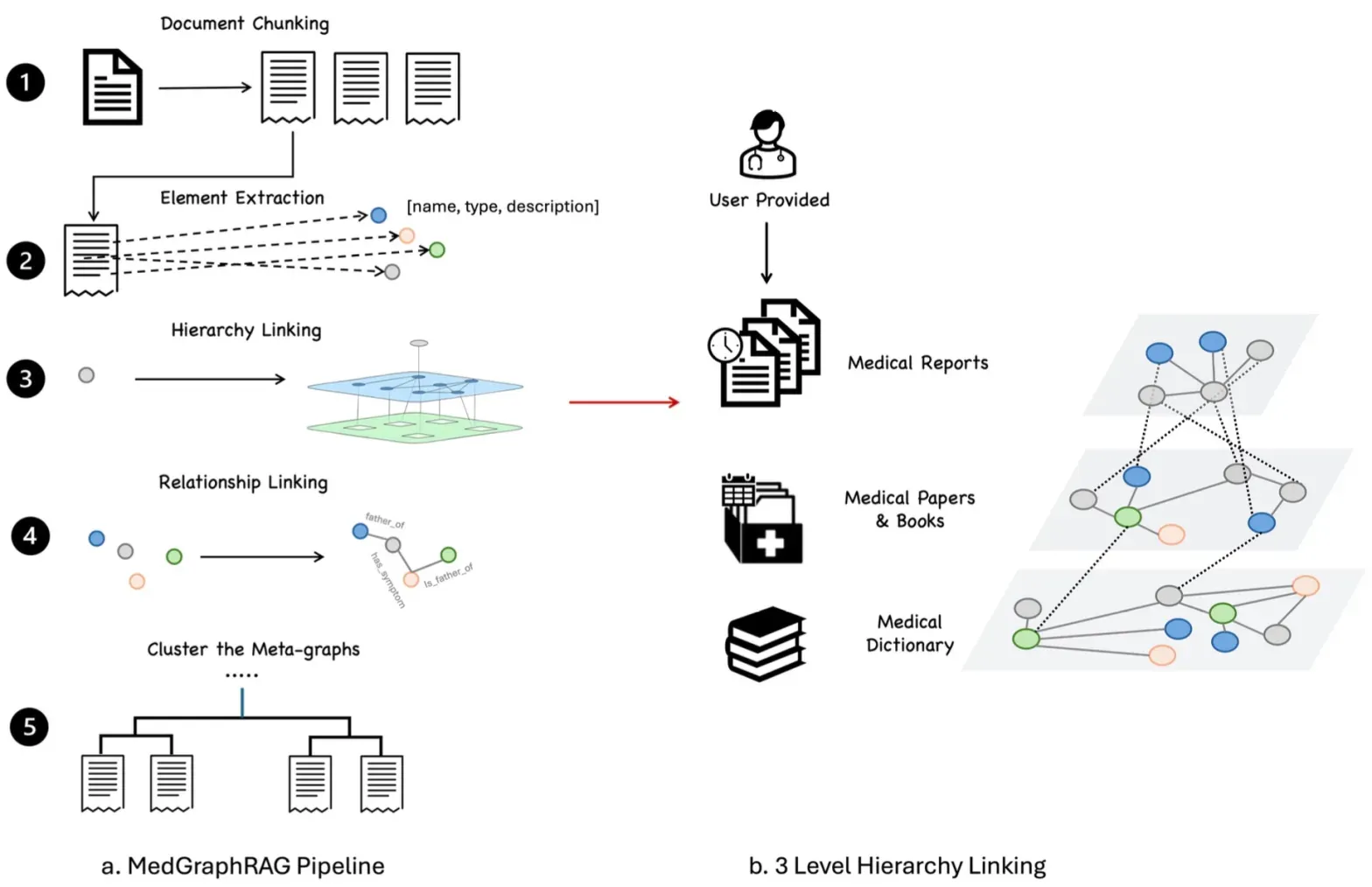

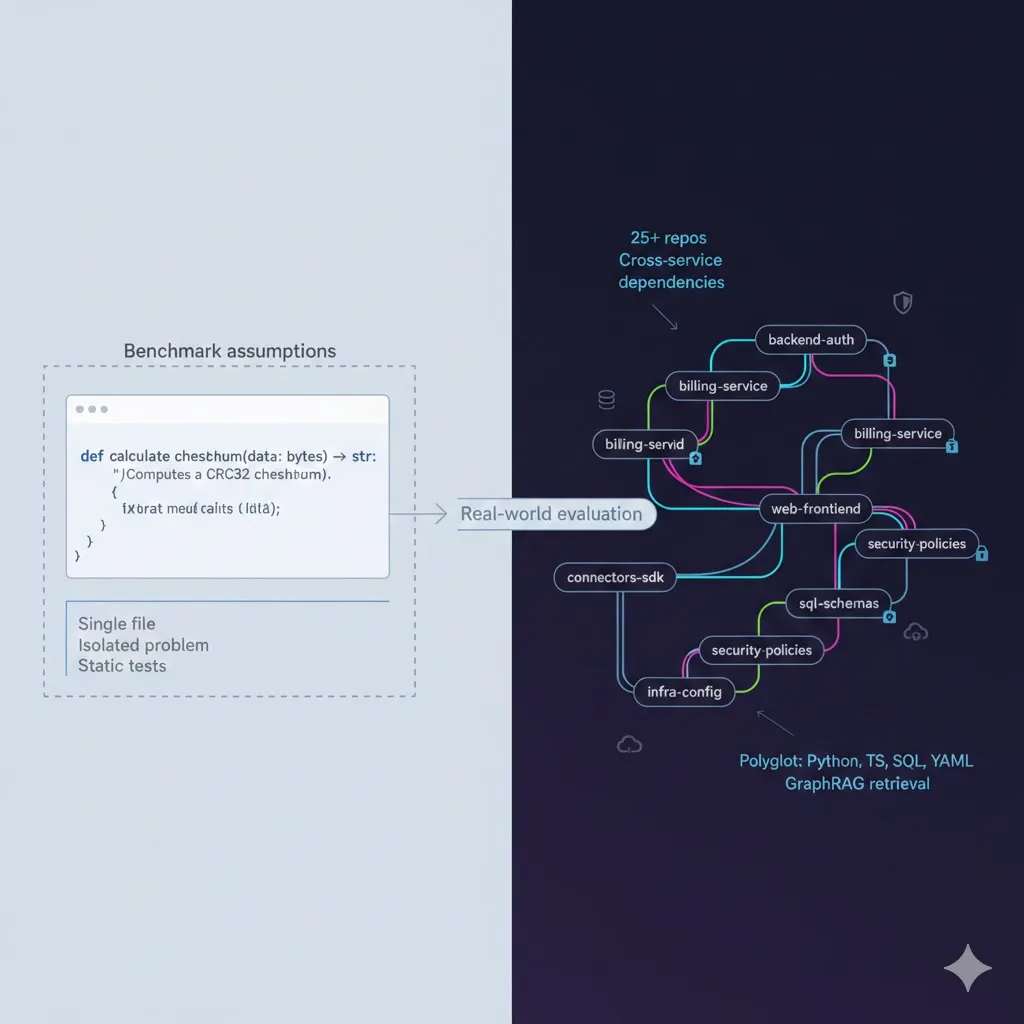

Individual layers may pass, but systems often fail at the seams. This post details how to run holistic 'System-in-the-Loop' tests, measuring how retrieval noise compounds into generation errors across 25+ repositories. We provide a blueprint for evaluating the full journey from a vague natural-language query to a multi-repo pull request.

A technical deep-dive into LLM context management, the computational limits of context windows, and why every AI tool's 'context graph' solution might just be clever marketing around semantic search and RAG.

Claude Code, Cursor, Copilot, Codex — they all share the same fundamental flaw: no persistent memory, brute-force file reading, and context that fills up and gets thrown away. Here's what none of them admit.

Retrieving nodes is only half the battle; the LLM must synthesize code that adheres to cross-repo constraints. This post explores measuring faithfulness, checking execution-level correctness against internal SDKs, and using LLM-as-a-Judge to verify that generated code respects the security and type contracts of separate repositories.

A developer tracked every token Claude Code consumed for a month. The result: 99.4% were input tokens. For every 1 token written, 166 were read. Here's what that means for your bill.
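The "99.4% input" and "166 read per 1 written" figures are the same fact stated two ways. A minimal sketch of the conversion, using only the numbers from the summary above (the function names are illustrative):

```python
def input_share(read_per_written: float) -> float:
    """Fraction of all tokens that are input when `read_per_written`
    tokens are read for every 1 token written."""
    return read_per_written / (read_per_written + 1)


def read_per_written(input_fraction: float) -> float:
    """Inverse: tokens read per token written, given the input fraction."""
    return input_fraction / (1 - input_fraction)


print(f"{input_share(166):.1%} of tokens are input")            # 99.4% of tokens are input
print(f"{read_per_written(0.994):.0f} read per token written")  # 166 read per token written
```

Because the output share is only about 0.6%, even a small drop in input tokens moves the bill far more than any change in output length.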

You're not imagining it. Research tested 18 frontier models and every single one degrades as the conversation gets longer. Here's the science behind it.

Needle in a Haystack tests one thing. RULER tests another. MRCR tests yet another. Here's what each benchmark actually measures and what it misses.

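A 20-turn conversation costs far more than you'd expect: because each turn resends the entire history, cumulative input tokens grow quadratically with the number of turns. Here are the exact formulas for multi-turn conversation costs across providers.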
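A minimal sketch of that cost model, assuming (hypothetically) that every turn adds `u` user tokens and `a` assistant tokens on top of a fixed `s`-token system prompt, that the full history is resent on every turn, and that no prompt caching applies. Prices are placeholder parameters, not any provider's actual rates.

```python
def conversation_input_tokens(turns: int, s: int, u: int, a: int) -> int:
    """Total input tokens billed across `turns` turns (no caching).

    Turn t (1-indexed) sends the system prompt, all previous (u + a)
    exchanges, and the new user message: s + (t - 1) * (u + a) + u.
    """
    return sum(s + (t - 1) * (u + a) + u for t in range(1, turns + 1))


def closed_form(turns: int, s: int, u: int, a: int) -> int:
    """Same total in closed form: T*(s + u) + (u + a) * T*(T - 1)/2."""
    return turns * (s + u) + (u + a) * turns * (turns - 1) // 2


def conversation_cost(turns, s, u, a, price_in_per_mtok, price_out_per_mtok):
    """Dollar cost with prices per million tokens (placeholder values)."""
    inp = conversation_input_tokens(turns, s, u, a)
    out = turns * a  # each turn generates `a` new output tokens
    return (inp * price_in_per_mtok + out * price_out_per_mtok) / 1_000_000


for T in (10, 20):
    print(T, conversation_input_tokens(T, s=2000, u=500, a=1000))
# 10 92500
# 20 335000
```

Doubling the conversation from 10 to 20 turns multiplies input tokens by about 3.6, because the `T*(T - 1)/2` term dominates once the history outweighs the system prompt.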

Training data is everything the model learned. The context window is what it's thinking about right now. Here's why the distinction matters.

The context window is your AI's short-term memory. It's not storage — it's a fixed-size desk. Everything the AI is thinking about right now must fit on this desk.

Three competing paradigms: grow context via hardware, replace attention with O(n) alternatives like Mamba, or build external memory systems. Which will win?

Flash Attention never materializes the full n×n attention matrix. Instead, it computes in tiles using fast GPU SRAM. Here's how it works and why it's 2-4× faster.
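Tiling works because softmax can be computed "online": keys are visited block by block while a running max and running normalizer are rescaled, and the final result equals standard attention up to rounding. The sketch below illustrates only that rescaling trick, in plain Python for a single query row; it is not the FlashAttention kernel, which also tiles queries, keeps tiles in GPU SRAM, and fuses the backward pass.

```python
import math
import random


def naive_attention_row(q, keys, values):
    """Reference: materializes all n scores for one query, then softmax."""
    d = len(q)
    scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    dv = len(values[0])
    return [sum(w * v[j] for w, v in zip(weights, values)) / total for j in range(dv)]


def tiled_attention_row(q, keys, values, block_size=4):
    """Online-softmax attention for one query, visiting keys in tiles.

    Only a running max `m`, running normalizer `l`, and running weighted
    sum `acc` are kept, so the full score row is never stored.
    """
    d, dv = len(q), len(values[0])
    m = -math.inf      # running max of scores seen so far
    l = 0.0            # running sum of exp(score - m)
    acc = [0.0] * dv   # running sum of exp(score - m) * value
    for start in range(0, len(keys), block_size):
        k_blk = keys[start:start + block_size]
        v_blk = values[start:start + block_size]
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in k_blk]
        m_new = max(m, max(scores))
        scale = math.exp(m - m_new)  # rescale old statistics to the new max (0.0 on first tile)
        l = l * scale + sum(math.exp(s - m_new) for s in scores)
        acc = [x * scale for x in acc]
        for s, v in zip(scores, v_blk):
            w = math.exp(s - m_new)
            for j in range(dv):
                acc[j] += w * v[j]
        m = m_new
    return [x / l for x in acc]


random.seed(0)
n, d = 10, 8
q = [random.gauss(0, 1) for _ in range(d)]
K = [[random.gauss(0, 1) for _ in range(d)] for _ in range(n)]
V = [[random.gauss(0, 1) for _ in range(d)] for _ in range(n)]
diff = max(abs(x - y) for x, y in zip(naive_attention_row(q, K, V),
                                      tiled_attention_row(q, K, V, block_size=3)))
print(diff)  # only floating-point rounding separates the two
```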

Total GPU memory = model weights + KV cache + activations + workspace. Here's the exact formula to compute maximum context length for any GPU configuration.
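A minimal sketch of that budget for a single sequence, assuming weights and KV cache share one precision and lumping activations and workspace into a fixed `overhead_bytes` budget (in practice activation memory also grows with sequence length). The 7B-parameter, 32-layer, 32-KV-head, head-dim-128 configuration and the 6 GB overhead are illustrative assumptions. The result is a memory ceiling only; it says nothing about the context length the model was trained to handle.

```python
def kv_bytes_per_token(n_layers, n_kv_heads, head_dim, bytes_per_elem=2):
    """KV cache bytes for one token: K and V (factor 2) per layer per KV head."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem


def max_context_tokens(gpu_bytes, n_params, n_layers, n_kv_heads, head_dim,
                       bytes_per_elem=2, overhead_bytes=0, batch_size=1):
    """Longest sequence whose KV cache fits after weights and a fixed overhead."""
    weights = n_params * bytes_per_elem
    free = gpu_bytes - weights - overhead_bytes
    if free <= 0:
        return 0
    per_token = kv_bytes_per_token(n_layers, n_kv_heads, head_dim, bytes_per_elem) * batch_size
    return free // per_token


# Hypothetical: 80 GB GPU, 7B params in fp16, 6 GB for activations + workspace.
print(max_context_tokens(80 * 10**9, 7 * 10**9, 32, 32, 128, overhead_bytes=6 * 10**9))
# 114440
```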

Google's Infini-Attention combines standard attention with a compressive memory that persists across segments — enabling theoretically infinite context at O(1) memory.

There are fundamental limits on how much information a fixed-width attention mechanism can extract from n tokens. Here's the math from Shannon's channel capacity to attention bounds.

During inference, the model stores Key and Value vectors for every token. This KV cache is often the biggest memory consumer. Here's the math behind it.
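To make the memory-for-compute trade concrete, here is a toy single-layer, single-head cache in plain Python (the class and method names are hypothetical, not from any framework). Real implementations keep one such cache per layer and per KV head, as GPU tensors.

```python
def matvec(w, x):
    """Multiply matrix `w` (list of rows) by vector `x`."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


class LayerKVCache:
    """Toy KV cache for one attention layer and one head.

    Each decoding step projects ONLY the newest token into a key and a value
    and appends them; earlier tokens' K/V vectors are reused, not recomputed.
    """

    def __init__(self):
        self.keys: list[list[float]] = []
        self.values: list[list[float]] = []
        self.projections = 0  # tokens projected into K/V so far

    def step(self, x, w_k, w_v):
        """Project the new token embedding `x`, append its K and V, and
        return everything the new token's query will attend over."""
        self.keys.append(matvec(w_k, x))
        self.values.append(matvec(w_v, x))
        self.projections += 1
        return self.keys, self.values

    def stored_floats(self) -> int:
        """Floats held in the cache: 2 (K and V) x tokens x head_dim."""
        return sum(len(k) for k in self.keys) + sum(len(v) for v in self.values)


n, d = 100, 4
w = [[0.1 * (i + j) for j in range(d)] for i in range(d)]  # toy projection weights
cache = LayerKVCache()
for t in range(n):
    cache.step([float(t)] * d, w, w)

print(cache.projections)      # 100 projections with the cache
print(n * (n + 1) // 2)       # 5050 if every step recomputed K/V for all tokens so far
print(cache.stored_floats())  # 800 = 2 * 100 tokens * 4 dims
```

The cache turns quadratic recomputation into linear work, but its size grows linearly with every token kept, which is why it dominates memory at long context lengths.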

Research proved that LLMs attend strongly to the beginning and end of context but poorly to the middle. This is a mathematical property, not a bug.

The exact formula for KV cache memory and worked examples for every major model architecture. Calculate your GPU requirements precisely.

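Standard multi-head attention (MHA) uses separate K and V projections for each head. Multi-query attention (MQA) shares one K/V head across all query heads, and grouped-query attention (GQA) shares one per group of query heads, reducing the KV cache dramatically with minimal quality loss.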
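The KV cache scales with the number of K/V heads, not query heads, so the saving is simply the ratio of head counts. A minimal sketch, assuming fp16 storage and a hypothetical 32-layer, 32-query-head, head-dim-128 model at a 32K-token context; the contiguous head-to-group mapping is one common layout.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim, bytes_per_elem=2):
    """K and V (factor 2) for every token, layer, and KV head; fp16 by default."""
    return 2 * seq_len * n_layers * n_kv_heads * head_dim * bytes_per_elem


def kv_head_for_query_head(h, n_q_heads, n_kv_heads):
    """Which shared K/V head query head `h` reads from (contiguous groups)."""
    group_size = n_q_heads // n_kv_heads
    return h // group_size


N_LAYERS, N_Q_HEADS, HEAD_DIM, SEQ = 32, 32, 128, 32_768  # hypothetical config
for name, kv_heads in [("MHA", 32), ("GQA (8 groups)", 8), ("MQA", 1)]:
    gib = kv_cache_bytes(SEQ, N_LAYERS, kv_heads, HEAD_DIM) / 2**30
    print(f"{name}: {gib:.2f} GiB")
# MHA: 16.00 GiB
# GQA (8 groups): 4.00 GiB
# MQA: 0.50 GiB

# With 8 KV heads, query heads 0-3 read KV head 0, heads 4-7 read KV head 1, and so on.
print([kv_head_for_query_head(h, N_Q_HEADS, 8) for h in range(N_Q_HEADS)])
```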

Transformers have no inherent notion of order. RoPE encodes relative positions via rotation matrices. Here's the full math from sinusoidal to NTK-aware scaling.
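Assuming the standard RoPE frequency schedule theta_i = base^(-2i/d) and the commonly cited "NTK-aware" rule base' = base * s^(d/(d-2)) for a context-extension factor s, the sketch below shows what the rule does: it leaves the fastest-rotating dimension pair unchanged and slows the slowest pair by exactly 1/s, the same as linear position interpolation at that end of the spectrum.

```python
def rope_inv_freqs(head_dim: int, base: float = 10000.0) -> list[float]:
    """RoPE rotation frequencies theta_i = base^(-2i/d) for i = 0 .. d/2 - 1."""
    return [base ** (-2 * i / head_dim) for i in range(head_dim // 2)]


def ntk_aware_base(base: float, scale: float, head_dim: int) -> float:
    """'NTK-aware' base adjustment: base * scale^(d / (d - 2))."""
    return base * scale ** (head_dim / (head_dim - 2))


d, s = 128, 4.0
orig = rope_inv_freqs(d)
ntk = rope_inv_freqs(d, ntk_aware_base(10000.0, s, d))
print(ntk[0] / orig[0])    # 1.0: highest frequency untouched
print(ntk[-1] / orig[-1])  # ~0.25: lowest frequency slowed by 1/s
```

Why it works: theta'_i / theta_i = s^(-2i/(d-2)), which is 1 at i = 0 and exactly 1/s at i = d/2 - 1, so high frequencies (local order) are preserved while low frequencies stretch to cover the longer context.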

Before the AI generates its first word, it must process EVERY token in the context. Here's why time-to-first-token increases with context length.

Prompt caching saves 90% on repeated context. For coding agents carrying 20-40K tokens of system prompts, the savings are enormous.
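A minimal sketch of the arithmetic, assuming Anthropic-style multipliers (cache writes billed at 1.25x the base input price, cache reads at 0.1x) and that every request after the first hits the cache before it expires. The $3 per million tokens price is a placeholder; check your provider's current pricing before relying on these numbers.

```python
def prefix_cost(prefix_tokens, requests, price_per_mtok,
                write_mult=1.25, read_mult=0.10, cached=True):
    """Cost of sending the same `prefix_tokens` prefix on `requests` calls.

    With caching, the first call writes the cache and every later call reads it.
    """
    base = prefix_tokens * price_per_mtok / 1_000_000
    if not cached or requests == 0:
        return base * requests
    return base * write_mult + base * read_mult * (requests - 1)


# A 30K-token system prompt reused across 50 agent requests.
uncached = prefix_cost(30_000, 50, 3.0, cached=False)
cached = prefix_cost(30_000, 50, 3.0)
print(f"uncached ${uncached:.2f}, cached ${cached:.2f}, saved {1 - cached / uncached:.1%}")
# uncached $4.50, cached $0.55, saved 87.7%
```

The one-time write premium means savings approach, but never reach, 90% as the number of cache hits grows.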

Self-attention computes all pairwise interactions between tokens. For n tokens, that's n² computations. Here's the full mathematical derivation.
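A naive single-head self-attention in plain Python makes the n² term visible: the score matrix has one entry per (query, key) pair. This is an illustrative sketch (no masking, batching, or multiple heads), not any library's implementation.

```python
import math
import random


def self_attention(x, w_q, w_k, w_v):
    """Naive single-head self-attention over token vectors `x` (n x d).

    Builds the full n x n score matrix: every query is dotted with every
    key, so work and memory for the scores grow as n^2.
    """
    def matvec(w, v):
        return [sum(wij * vj for wij, vj in zip(row, v)) for row in w]

    q = [matvec(w_q, t) for t in x]
    k = [matvec(w_k, t) for t in x]
    v = [matvec(w_v, t) for t in x]
    d_k = len(k[0])
    # n x n matrix of scaled dot products
    scores = [[sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d_k) for kj in k] for qi in q]
    out = []
    for row in scores:
        m = max(row)                      # subtract max for numerical stability
        e = [math.exp(s - m) for s in row]
        z = sum(e)
        out.append([sum(ei * vj[c] for ei, vj in zip(e, v)) / z for c in range(len(v[0]))])
    return out, scores


random.seed(0)
d = 8
W = [[random.gauss(0, 0.3) for _ in range(d)] for _ in range(d)]
for n in (64, 128):
    x = [[random.gauss(0, 1) for _ in range(d)] for _ in range(n)]
    _, scores = self_attention(x, W, W, W)
    print(n, sum(len(r) for r in scores))  # 64 4096, then 128 16384
```

Doubling the sequence from 64 to 128 tokens quadruples the number of scores, which is the quadratic bottleneck the later posts try to break.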

Context compaction is a lossy compression problem. Rate-distortion theory gives the theoretical lower bound on how much conversation history can be compressed.

When a single GPU can't hold the KV cache, you distribute the sequence across multiple GPUs. Here's how ring attention enables million-token contexts.

Similar to Kaplan's scaling laws for model size, there are scaling laws for context length. Performance doesn't scale linearly — here's the math.

RoPE is used by virtually every modern LLM. Here's the complete derivation from first principles, proof of the relative position property, and NTK-aware scaling.
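A numerical check of the relative-position property, assuming the adjacent-pair layout and base 10000 of the original RoPE formulation (some implementations pair dimension i with i + d/2 instead). Rotating q by angle m*theta and k by n*theta gives a dot product that depends only on the offset, because R(m*theta)^T R(n*theta) = R((n - m)*theta).

```python
import math


def rope(vec, pos, base=10000.0):
    """Rotate consecutive pairs (x0, x1), (x2, x3), ... of `vec` by pos * theta_i,
    with theta_i = base^(-2i/d)."""
    d = len(vec)
    out = []
    for i in range(d // 2):
        theta = base ** (-2 * i / d)
        c, s = math.cos(pos * theta), math.sin(pos * theta)
        x0, x1 = vec[2 * i], vec[2 * i + 1]
        out += [x0 * c - x1 * s, x0 * s + x1 * c]  # 2D rotation of this pair
    return out


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


q = [0.3, -1.2, 0.8, 0.5, -0.7, 0.1]
k = [1.1, 0.4, -0.6, 0.9, 0.2, -0.3]
a = dot(rope(q, 10), rope(k, 7))     # positions 10 and 7: offset 3
b = dot(rope(q, 103), rope(k, 100))  # positions 103 and 100: offset 3
print(abs(a - b) < 1e-9)             # True: the score depends only on m - n
```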

As context grows, softmax normalizes attention weights so each relevant token gets less attention. This mathematical property is why AI accuracy drops with length.
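A minimal sketch of that dilution effect under a deliberately simple assumption: one relevant token scores `margin` higher than every other token, and all distractors score the same. Softmax then gives the relevant token e^margin / (e^margin + n - 1) of the attention mass.

```python
import math


def relevant_weight(n_tokens: int, margin: float) -> float:
    """Softmax weight on one relevant token whose score beats each of the
    other n_tokens - 1 equal-scored distractors by `margin`."""
    return math.exp(margin) / (math.exp(margin) + (n_tokens - 1))


for n in (1_000, 10_000, 100_000, 1_000_000):
    print(f"{n:>9,} tokens -> {relevant_weight(n, margin=5.0):.4f}")
```

The score gap stays fixed, yet once n - 1 is much larger than e^margin the relevant token's weight falls roughly in proportion to 1/n: every extra token takes a share of a fixed budget of 1.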

4K vs 32K vs 128K vs 200K vs 1M — what do these numbers actually mean for your experience? Bigger isn't always better.

Multiple research approaches attack the quadratic bottleneck: Longformer, Reformer, Linformer, and linear attention. Here's the math behind each one.
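Linear attention's core trick is associativity: with a feature map phi in place of softmax, (phi(Q) phi(K)^T) V equals phi(Q) (phi(K)^T V), and the right-hand side never forms an n x n matrix. The sketch below assumes the kernelized formulation with phi(x) = elu(x) + 1 and shows the non-causal case only; both orderings give the same output.

```python
import math
import random


def phi(x):
    """Feature map elu(x) + 1, which keeps every feature positive."""
    return [xi + 1.0 if xi > 0 else math.exp(xi) for xi in x]


def linear_attention_quadratic(Q, K, V):
    """O(n^2): form every pairwise similarity phi(q_i) . phi(k_j) explicitly."""
    fk = [phi(k) for k in K]
    out = []
    for q in Q:
        fq = phi(q)
        w = [sum(a * b for a, b in zip(fq, k)) for k in fk]
        z = sum(w)
        out.append([sum(wj * v[c] for wj, v in zip(w, V)) / z for c in range(len(V[0]))])
    return out


def linear_attention_linear(Q, K, V):
    """O(n): accumulate sum_j phi(k_j) v_j^T and sum_j phi(k_j) once, reuse per query."""
    fk = [phi(k) for k in K]
    r, dv = len(fk[0]), len(V[0])
    S = [[0.0] * dv for _ in range(r)]  # sum_j phi(k_j) v_j^T  (r x dv)
    zsum = [0.0] * r                    # sum_j phi(k_j)
    for k, v in zip(fk, V):
        for a in range(r):
            zsum[a] += k[a]
            for c in range(dv):
                S[a][c] += k[a] * v[c]
    out = []
    for q in Q:
        fq = phi(q)
        z = sum(fa * za for fa, za in zip(fq, zsum))
        out.append([sum(fq[a] * S[a][c] for a in range(r)) / z for c in range(dv)])
    return out


random.seed(0)
n, d = 20, 4
Q = [[random.gauss(0, 1) for _ in range(d)] for _ in range(n)]
K = [[random.gauss(0, 1) for _ in range(d)] for _ in range(n)]
V = [[random.gauss(0, 1) for _ in range(d)] for _ in range(n)]
a = linear_attention_quadratic(Q, K, V)
b = linear_attention_linear(Q, K, V)
print(max(abs(x - y) for ra, rb in zip(a, b) for x, y in zip(ra, rb)))  # rounding only
```

The price of the linear form is that phi only approximates softmax's sharp weighting, which is where these methods typically lose some quality.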

AI doesn't read words — it reads tokens. Learn how tokenization works, why code costs more than prose, and how to calculate your token usage.

The attention mechanism is the beating heart of every LLM. Here's how it decides which parts of your conversation matter most — explained with analogies before equations.

When you upload a PDF or code file, it gets converted to tokens and placed directly into the context window — consuming the same limited space as your messages.

AI models are stateless. Each conversation is a fresh start. There's no database storing what you said yesterday. Here's why — and what it means for you.

If context windows were infinite, free, and didn't degrade, we wouldn't need RAG. Here's why retrieval-augmented generation exists and when to use it.

Anthropic just throttled Claude Opus and Sonnet during peak hours. Developers are canceling subscriptions and looking for alternatives. Here's the argument: a good open-source model with great context beats a frontier model that won't let you use it.

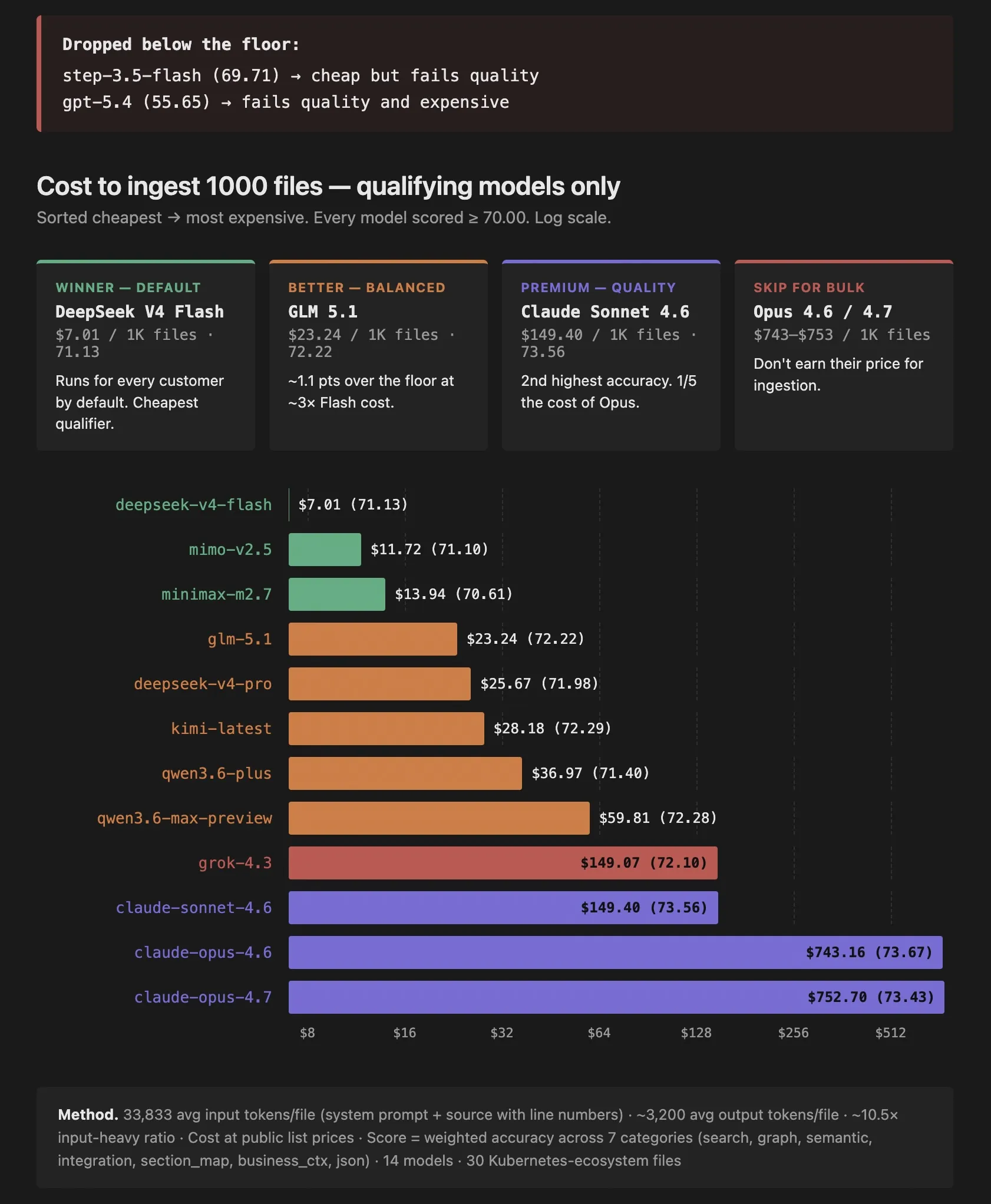

A real cost-vs-accuracy benchmark of DeepSeek, GLM, Kimi, Qwen, Sonnet, Opus, and GPT-5 for indexing codebases. Why per-file LLM analysis isn't actually expensive if you pick the right model.

Vector embeddings treat code like English prose. AST parsers see structure but not meaning. Raw LLMs forget everything between sessions. Here is why the LLM compiler pattern with a persistent semantic graph is the only approach that actually works for cross-repository code intelligence, and why open-source models at $7 per 1,000 files make it practical today.

Anthropic has erased $1 trillion in SaaS market cap by absorbing entire product categories — Cowork ate project management, Design ate prototyping, Managed Agents ate orchestration. So why won't they build ByteBell? Because a model-agnostic, self-hosted knowledge graph directly conflicts with their business model. Here is the full kill list and the honest probability analysis.

Anthropic shipped Claude Code, the Model Context Protocol (MCP), and a frontier model, and then deliberately stopped. This post maps the 5 adjacent categories Anthropic chose not to build, why they made that choice, and what it means for anyone building infrastructure underneath their agents.

A comprehensive map of the open-source tools providing context to AI coding agents in 2026, grouped by underlying technical approach (vector embeddings, AST parsing, compiler indexers, and LLM-generated metadata), with an honest analysis of where each one wins and where ByteBell's cross-repo, on-prem, business-context graph is the right answer.